rancho posted on 2015-8-2 00:27
Thanks a lot.

Alkaloid0515 posted on 2015-8-1 23:28
I just followed someone else's settings.

larry posted on 2015-8-1 22:23
Everything that has logs must be cleared. The nodes have to be back in the initial, never-formatted state.
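The thread does not say why a clean, never-formatted state is required. One common reason, offered here as an assumption rather than as the poster's diagnosis, is that formatting the NameNode again gives it a new `clusterID`, while DataNode storage directories left over from an earlier format still carry the old one in their `current/VERSION` file. A minimal Python sketch for comparing the two values follows; the function names and the mismatching ID are hypothetical, and the matching ID is the `cid` value that appears in the log in this thread.

```python
def parse_version(text):
    """Parse the key=value lines of a Hadoop storage VERSION file."""
    props = {}
    for line in text.splitlines():
        line = line.strip()
        # Skip blank lines and the leading "#..." comment line.
        if not line or line.startswith("#"):
            continue
        key, sep, value = line.partition("=")
        if sep:
            props[key.strip()] = value.strip()
    return props


def same_cluster(namenode_version, datanode_version):
    """Return True only if both VERSION texts carry the same clusterID."""
    nn_id = parse_version(namenode_version).get("clusterID")
    dn_id = parse_version(datanode_version).get("clusterID")
    return nn_id is not None and nn_id == dn_id


if __name__ == "__main__":
    # Hypothetical file contents; on a real node, read the text from
    # <storage dir>/current/VERSION instead.
    nn = "clusterID=CID-2282d5f8-13d8-4c38-96e8-b8c0864f68fb\n"
    dn = "clusterID=CID-00000000-0000-0000-0000-000000000000\n"
    print("same cluster:", same_cluster(nn, dn))
```

If the IDs differ, clearing the storage directories on every node before formatting, as advised above, is one way to bring them back in line. It also discards any data already stored in HDFS.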

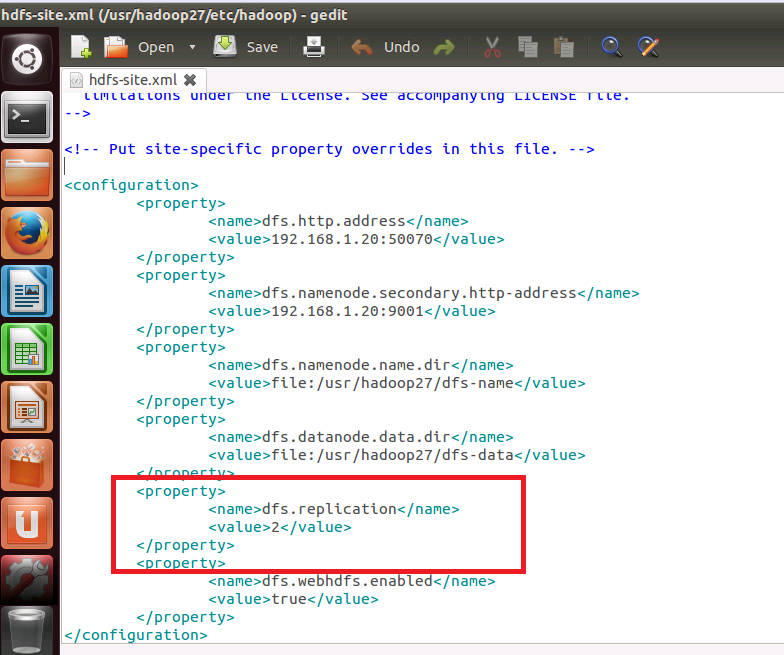

larry posted on 2015-8-1 23:10
How many nodes do you have? What is setting this to 2 supposed to mean?


Here is the master's log from after the error; I am not sure whether it is enough. It contains two warnings and ends with an error.

hadoop-larry-namenode-larry-master.rar

4.88 KB, downloads: 1

Master log after the error


2015-07-30 22:32:30,799 INFO org.apache.hadoop.hdfs.server.namenode.NameNode: STARTUP_MSG: /************************************************************ STARTUP_MSG: Starting NameNode STARTUP_MSG: host = larry-master/192.168.1.20 STARTUP_MSG: args = [] STARTUP_MSG: version = 2.7.1 STARTUP_MSG: classpath = /usr/hadoop27/etc/hadoop:/usr/hadoop27/share/hadoop/common/lib/api-asn1-api-1.0.0-M20.jar:/usr/hadoop27/share/hadoop/common/lib/jsp-api-2.1.jar:/usr/hadoop27/share/hadoop/common/lib/snappy-java-1.0.4.1.jar:/usr/hadoop27/share/hadoop/common/lib/jersey-json-1.9.jar:/usr/hadoop27/share/hadoop/common/lib/jersey-core-1.9.jar:/usr/hadoop27/share/hadoop/common/lib/commons-beanutils-core-1.8.0.jar:/usr/hadoop27/share/hadoop/common/lib/guava-11.0.2.jar:/usr/hadoop27/share/hadoop/common/lib/commons-httpclient-3.1.jar:/usr/hadoop27/share/hadoop/common/lib/netty-3.6.2.Final.jar:/usr/hadoop27/share/hadoop/common/lib/paranamer-2.3.jar:/usr/hadoop27/share/hadoop/common/lib/hadoop-auth-2.7.1.jar:/usr/hadoop27/share/hadoop/common/lib/jsch-0.1.42.jar:/usr/hadoop27/share/hadoop/common/lib/httpclient-4.2.5.jar:/usr/hadoop27/share/hadoop/common/lib/mockito-all-1.8.5.jar:/usr/hadoop27/share/hadoop/common/lib/commons-compress-1.4.1.jar:/usr/hadoop27/share/hadoop/common/lib/hamcrest-core-1.3.jar:/usr/hadoop27/share/hadoop/common/lib/protobuf-java-2.5.0.jar:/usr/hadoop27/share/hadoop/common/lib/servlet-api-2.5.jar:/usr/hadoop27/share/hadoop/common/lib/slf4j-api-1.7.10.jar:/usr/hadoop27/share/hadoop/common/lib/jersey-server-1.9.jar:/usr/hadoop27/share/hadoop/common/lib/jetty-6.1.26.jar:/usr/hadoop27/share/hadoop/common/lib/asm-3.2.jar:/usr/hadoop27/share/hadoop/common/lib/stax-api-1.0-2.jar:/usr/hadoop27/share/hadoop/common/lib/slf4j-log4j12-1.7.10.jar:/usr/hadoop27/share/hadoop/common/lib/commons-net-3.1.jar:/usr/hadoop27/share/hadoop/common/lib/jackson-core-asl-1.9.13.jar:/usr/hadoop27/share/hadoop/common/lib/commons-logging-1.1.3.jar:/usr/hadoop27/share/hadoop/common/lib/activation-1.1.j
ar:/usr/hadoop27/share/hadoop/common/lib/xz-1.0.jar:/usr/hadoop27/share/hadoop/common/lib/jackson-mapper-asl-1.9.13.jar:/usr/hadoop27/share/hadoop/common/lib/api-util-1.0.0-M20.jar:/usr/hadoop27/share/hadoop/common/lib/commons-configuration-1.6.jar:/usr/hadoop27/share/hadoop/common/lib/curator-client-2.7.1.jar:/usr/hadoop27/share/hadoop/common/lib/zookeeper-3.4.6.jar:/usr/hadoop27/share/hadoop/common/lib/jackson-jaxrs-1.9.13.jar:/usr/hadoop27/share/hadoop/common/lib/hadoop-annotations-2.7.1.jar:/usr/hadoop27/share/hadoop/common/lib/jaxb-api-2.2.2.jar:/usr/hadoop27/share/hadoop/common/lib/curator-recipes-2.7.1.jar:/usr/hadoop27/share/hadoop/common/lib/xmlenc-0.52.jar:/usr/hadoop27/share/hadoop/common/lib/commons-lang-2.6.jar:/usr/hadoop27/share/hadoop/common/lib/commons-collections-3.2.1.jar:/usr/hadoop27/share/hadoop/common/lib/gson-2.2.4.jar:/usr/hadoop27/share/hadoop/common/lib/java-xmlbuilder-0.4.jar:/usr/hadoop27/share/hadoop/common/lib/jsr305-3.0.0.jar:/usr/hadoop27/share/hadoop/common/lib/avro-1.7.4.jar:/usr/hadoop27/share/hadoop/common/lib/log4j-1.2.17.jar:/usr/hadoop27/share/hadoop/common/lib/apacheds-kerberos-codec-2.0.0-M15.jar:/usr/hadoop27/share/hadoop/common/lib/commons-io-2.4.jar:/usr/hadoop27/share/hadoop/common/lib/commons-codec-1.4.jar:/usr/hadoop27/share/hadoop/common/lib/curator-framework-2.7.1.jar:/usr/hadoop27/share/hadoop/common/lib/commons-cli-1.2.jar:/usr/hadoop27/share/hadoop/common/lib/apacheds-i18n-2.0.0-M15.jar:/usr/hadoop27/share/hadoop/common/lib/jackson-xc-1.9.13.jar:/usr/hadoop27/share/hadoop/common/lib/jettison-1.1.jar:/usr/hadoop27/share/hadoop/common/lib/httpcore-4.2.5.jar:/usr/hadoop27/share/hadoop/common/lib/commons-digester-1.8.jar:/usr/hadoop27/share/hadoop/common/lib/commons-beanutils-1.7.0.jar:/usr/hadoop27/share/hadoop/common/lib/htrace-core-3.1.0-incubating.jar:/usr/hadoop27/share/hadoop/common/lib/jetty-util-6.1.26.jar:/usr/hadoop27/share/hadoop/common/lib/jets3t-0.9.0.jar:/usr/hadoop27/share/hadoop/common/lib/jaxb-impl-2.
2.3-1.jar:/usr/hadoop27/share/hadoop/common/lib/junit-4.11.jar:/usr/hadoop27/share/hadoop/common/lib/commons-math3-3.1.1.jar:/usr/hadoop27/share/hadoop/common/hadoop-nfs-2.7.1.jar:/usr/hadoop27/share/hadoop/common/hadoop-common-2.7.1.jar:/usr/hadoop27/share/hadoop/common/hadoop-common-2.7.1-tests.jar:/usr/hadoop27/share/hadoop/hdfs:/usr/hadoop27/share/hadoop/hdfs/lib/jersey-core-1.9.jar:/usr/hadoop27/share/hadoop/hdfs/lib/guava-11.0.2.jar:/usr/hadoop27/share/hadoop/hdfs/lib/netty-3.6.2.Final.jar:/usr/hadoop27/share/hadoop/hdfs/lib/protobuf-java-2.5.0.jar:/usr/hadoop27/share/hadoop/hdfs/lib/servlet-api-2.5.jar:/usr/hadoop27/share/hadoop/hdfs/lib/jersey-server-1.9.jar:/usr/hadoop27/share/hadoop/hdfs/lib/jetty-6.1.26.jar:/usr/hadoop27/share/hadoop/hdfs/lib/asm-3.2.jar:/usr/hadoop27/share/hadoop/hdfs/lib/netty-all-4.0.23.Final.jar:/usr/hadoop27/share/hadoop/hdfs/lib/xml-apis-1.3.04.jar:/usr/hadoop27/share/hadoop/hdfs/lib/jackson-core-asl-1.9.13.jar:/usr/hadoop27/share/hadoop/hdfs/lib/commons-logging-1.1.3.jar:/usr/hadoop27/share/hadoop/hdfs/lib/leveldbjni-all-1.8.jar:/usr/hadoop27/share/hadoop/hdfs/lib/jackson-mapper-asl-1.9.13.jar:/usr/hadoop27/share/hadoop/hdfs/lib/xmlenc-0.52.jar:/usr/hadoop27/share/hadoop/hdfs/lib/commons-lang-2.6.jar:/usr/hadoop27/share/hadoop/hdfs/lib/jsr305-3.0.0.jar:/usr/hadoop27/share/hadoop/hdfs/lib/log4j-1.2.17.jar:/usr/hadoop27/share/hadoop/hdfs/lib/commons-io-2.4.jar:/usr/hadoop27/share/hadoop/hdfs/lib/commons-codec-1.4.jar:/usr/hadoop27/share/hadoop/hdfs/lib/xercesImpl-2.9.1.jar:/usr/hadoop27/share/hadoop/hdfs/lib/commons-cli-1.2.jar:/usr/hadoop27/share/hadoop/hdfs/lib/htrace-core-3.1.0-incubating.jar:/usr/hadoop27/share/hadoop/hdfs/lib/commons-daemon-1.0.13.jar:/usr/hadoop27/share/hadoop/hdfs/lib/jetty-util-6.1.26.jar:/usr/hadoop27/share/hadoop/hdfs/hadoop-hdfs-2.7.1.jar:/usr/hadoop27/share/hadoop/hdfs/hadoop-hdfs-2.7.1-tests.jar:/usr/hadoop27/share/hadoop/hdfs/hadoop-hdfs-nfs-2.7.1.jar:/usr/hadoop27/share/hadoop/yarn/lib/jersey-json-1.9.
jar:/usr/hadoop27/share/hadoop/yarn/lib/jersey-core-1.9.jar:/usr/hadoop27/share/hadoop/yarn/lib/guava-11.0.2.jar:/usr/hadoop27/share/hadoop/yarn/lib/netty-3.6.2.Final.jar:/usr/hadoop27/share/hadoop/yarn/lib/commons-compress-1.4.1.jar:/usr/hadoop27/share/hadoop/yarn/lib/protobuf-java-2.5.0.jar:/usr/hadoop27/share/hadoop/yarn/lib/servlet-api-2.5.jar:/usr/hadoop27/share/hadoop/yarn/lib/jersey-server-1.9.jar:/usr/hadoop27/share/hadoop/yarn/lib/jetty-6.1.26.jar:/usr/hadoop27/share/hadoop/yarn/lib/asm-3.2.jar:/usr/hadoop27/share/hadoop/yarn/lib/stax-api-1.0-2.jar:/usr/hadoop27/share/hadoop/yarn/lib/jackson-core-asl-1.9.13.jar:/usr/hadoop27/share/hadoop/yarn/lib/commons-logging-1.1.3.jar:/usr/hadoop27/share/hadoop/yarn/lib/activation-1.1.jar:/usr/hadoop27/share/hadoop/yarn/lib/leveldbjni-all-1.8.jar:/usr/hadoop27/share/hadoop/yarn/lib/xz-1.0.jar:/usr/hadoop27/share/hadoop/yarn/lib/jackson-mapper-asl-1.9.13.jar:/usr/hadoop27/share/hadoop/yarn/lib/zookeeper-3.4.6.jar:/usr/hadoop27/share/hadoop/yarn/lib/jackson-jaxrs-1.9.13.jar:/usr/hadoop27/share/hadoop/yarn/lib/guice-3.0.jar:/usr/hadoop27/share/hadoop/yarn/lib/jaxb-api-2.2.2.jar:/usr/hadoop27/share/hadoop/yarn/lib/commons-lang-2.6.jar:/usr/hadoop27/share/hadoop/yarn/lib/zookeeper-3.4.6-tests.jar:/usr/hadoop27/share/hadoop/yarn/lib/commons-collections-3.2.1.jar:/usr/hadoop27/share/hadoop/yarn/lib/jsr305-3.0.0.jar:/usr/hadoop27/share/hadoop/yarn/lib/javax.inject-1.jar:/usr/hadoop27/share/hadoop/yarn/lib/log4j-1.2.17.jar:/usr/hadoop27/share/hadoop/yarn/lib/commons-io-2.4.jar:/usr/hadoop27/share/hadoop/yarn/lib/commons-codec-1.4.jar:/usr/hadoop27/share/hadoop/yarn/lib/commons-cli-1.2.jar:/usr/hadoop27/share/hadoop/yarn/lib/aopalliance-1.0.jar:/usr/hadoop27/share/hadoop/yarn/lib/jackson-xc-1.9.13.jar:/usr/hadoop27/share/hadoop/yarn/lib/jersey-guice-1.9.jar:/usr/hadoop27/share/hadoop/yarn/lib/jettison-1.1.jar:/usr/hadoop27/share/hadoop/yarn/lib/guice-servlet-3.0.jar:/usr/hadoop27/share/hadoop/yarn/lib/jetty-util-6.1.26.jar:/usr/h
adoop27/share/hadoop/yarn/lib/jersey-client-1.9.jar:/usr/hadoop27/share/hadoop/yarn/lib/jaxb-impl-2.2.3-1.jar:/usr/hadoop27/share/hadoop/yarn/hadoop-yarn-server-sharedcachemanager-2.7.1.jar:/usr/hadoop27/share/hadoop/yarn/hadoop-yarn-registry-2.7.1.jar:/usr/hadoop27/share/hadoop/yarn/hadoop-yarn-server-applicationhistoryservice-2.7.1.jar:/usr/hadoop27/share/hadoop/yarn/hadoop-yarn-applications-distributedshell-2.7.1.jar:/usr/hadoop27/share/hadoop/yarn/hadoop-yarn-common-2.7.1.jar:/usr/hadoop27/share/hadoop/yarn/hadoop-yarn-client-2.7.1.jar:/usr/hadoop27/share/hadoop/yarn/hadoop-yarn-server-common-2.7.1.jar:/usr/hadoop27/share/hadoop/yarn/hadoop-yarn-applications-unmanaged-am-launcher-2.7.1.jar:/usr/hadoop27/share/hadoop/yarn/hadoop-yarn-server-tests-2.7.1.jar:/usr/hadoop27/share/hadoop/yarn/hadoop-yarn-api-2.7.1.jar:/usr/hadoop27/share/hadoop/yarn/hadoop-yarn-server-web-proxy-2.7.1.jar:/usr/hadoop27/share/hadoop/yarn/hadoop-yarn-server-resourcemanager-2.7.1.jar:/usr/hadoop27/share/hadoop/yarn/hadoop-yarn-server-nodemanager-2.7.1.jar:/usr/hadoop27/share/hadoop/mapreduce/lib/snappy-java-1.0.4.1.jar:/usr/hadoop27/share/hadoop/mapreduce/lib/jersey-core-1.9.jar:/usr/hadoop27/share/hadoop/mapreduce/lib/netty-3.6.2.Final.jar:/usr/hadoop27/share/hadoop/mapreduce/lib/paranamer-2.3.jar:/usr/hadoop27/share/hadoop/mapreduce/lib/commons-compress-1.4.1.jar:/usr/hadoop27/share/hadoop/mapreduce/lib/hamcrest-core-1.3.jar:/usr/hadoop27/share/hadoop/mapreduce/lib/protobuf-java-2.5.0.jar:/usr/hadoop27/share/hadoop/mapreduce/lib/jersey-server-1.9.jar:/usr/hadoop27/share/hadoop/mapreduce/lib/asm-3.2.jar:/usr/hadoop27/share/hadoop/mapreduce/lib/jackson-core-asl-1.9.13.jar:/usr/hadoop27/share/hadoop/mapreduce/lib/leveldbjni-all-1.8.jar:/usr/hadoop27/share/hadoop/mapreduce/lib/xz-1.0.jar:/usr/hadoop27/share/hadoop/mapreduce/lib/jackson-mapper-asl-1.9.13.jar:/usr/hadoop27/share/hadoop/mapreduce/lib/hadoop-annotations-2.7.1.jar:/usr/hadoop27/share/hadoop/mapreduce/lib/guice-3.0.jar:/usr/hadoo
p27/share/hadoop/mapreduce/lib/avro-1.7.4.jar:/usr/hadoop27/share/hadoop/mapreduce/lib/javax.inject-1.jar:/usr/hadoop27/share/hadoop/mapreduce/lib/log4j-1.2.17.jar:/usr/hadoop27/share/hadoop/mapreduce/lib/commons-io-2.4.jar:/usr/hadoop27/share/hadoop/mapreduce/lib/aopalliance-1.0.jar:/usr/hadoop27/share/hadoop/mapreduce/lib/jersey-guice-1.9.jar:/usr/hadoop27/share/hadoop/mapreduce/lib/guice-servlet-3.0.jar:/usr/hadoop27/share/hadoop/mapreduce/lib/junit-4.11.jar:/usr/hadoop27/share/hadoop/mapreduce/hadoop-mapreduce-client-common-2.7.1.jar:/usr/hadoop27/share/hadoop/mapreduce/hadoop-mapreduce-client-hs-2.7.1.jar:/usr/hadoop27/share/hadoop/mapreduce/hadoop-mapreduce-client-app-2.7.1.jar:/usr/hadoop27/share/hadoop/mapreduce/hadoop-mapreduce-client-jobclient-2.7.1.jar:/usr/hadoop27/share/hadoop/mapreduce/hadoop-mapreduce-client-core-2.7.1.jar:/usr/hadoop27/share/hadoop/mapreduce/hadoop-mapreduce-client-shuffle-2.7.1.jar:/usr/hadoop27/share/hadoop/mapreduce/hadoop-mapreduce-client-hs-plugins-2.7.1.jar:/usr/hadoop27/share/hadoop/mapreduce/hadoop-mapreduce-client-jobclient-2.7.1-tests.jar:/usr/hadoop27/share/hadoop/mapreduce/hadoop-mapreduce-examples-2.7.1.jar:/contrib/capacity-scheduler/*.jar:/usr/hadoop27/contrib/capacity-scheduler/*.jar:/usr/hadoop27/contrib/capacity-scheduler/*.jar STARTUP_MSG: build = https://git-wip-us.apache.org/repos/asf/hadoop.git -r 15ecc87ccf4a0228f35af08fc56de536e6ce657a; compiled by 'jenkins' on 2015-06-29T06:04Z STARTUP_MSG: java = 1.8.0_45 ************************************************************/ 2015-07-30 22:32:30,820 INFO org.apache.hadoop.hdfs.server.namenode.NameNode: registered UNIX signal handlers for [TERM, HUP, INT] 2015-07-30 22:32:30,832 INFO org.apache.hadoop.hdfs.server.namenode.NameNode: createNameNode [] 2015-07-30 22:32:31,599 INFO org.apache.hadoop.metrics2.impl.MetricsConfig: loaded properties from hadoop-metrics2.properties 2015-07-30 22:32:32,330 INFO org.apache.hadoop.metrics2.impl.MetricsSystemImpl: Scheduled 
snapshot period at 10 second(s). 2015-07-30 22:32:32,330 INFO org.apache.hadoop.metrics2.impl.MetricsSystemImpl: NameNode metrics system started 2015-07-30 22:32:32,336 INFO org.apache.hadoop.hdfs.server.namenode.NameNode: fs.defaultFS is hdfs://192.168.1.20:9000 2015-07-30 22:32:32,337 INFO org.apache.hadoop.hdfs.server.namenode.NameNode: Clients are to use 192.168.1.20:9000 to access this namenode/service. 2015-07-30 22:32:32,959 INFO org.apache.hadoop.hdfs.DFSUtil: Starting Web-server for hdfs at: http://larry-master:50070 2015-07-30 22:32:33,471 INFO org.mortbay.log: Logging to org.slf4j.impl.Log4jLoggerAdapter(org.mortbay.log) via org.mortbay.log.Slf4jLog 2015-07-30 22:32:33,509 INFO org.apache.hadoop.security.authentication.server.AuthenticationFilter: Unable to initialize FileSignerSecretProvider, falling back to use random secrets. 2015-07-30 22:32:33,552 INFO org.apache.hadoop.http.HttpRequestLog: Http request log for http.requests.namenode is not defined 2015-07-30 22:32:33,569 INFO org.apache.hadoop.http.HttpServer2: Added global filter 'safety' (class=org.apache.hadoop.http.HttpServer2$QuotingInputFilter) 2015-07-30 22:32:33,578 INFO org.apache.hadoop.http.HttpServer2: Added filter static_user_filter (class=org.apache.hadoop.http.lib.StaticUserWebFilter$StaticUserFilter) to context hdfs 2015-07-30 22:32:33,579 INFO org.apache.hadoop.http.HttpServer2: Added filter static_user_filter (class=org.apache.hadoop.http.lib.StaticUserWebFilter$StaticUserFilter) to context logs 2015-07-30 22:32:33,579 INFO org.apache.hadoop.http.HttpServer2: Added filter static_user_filter (class=org.apache.hadoop.http.lib.StaticUserWebFilter$StaticUserFilter) to context static 2015-07-30 22:32:33,742 INFO org.apache.hadoop.http.HttpServer2: Added filter 'org.apache.hadoop.hdfs.web.AuthFilter' (class=org.apache.hadoop.hdfs.web.AuthFilter) 2015-07-30 22:32:33,745 INFO org.apache.hadoop.http.HttpServer2: addJerseyResourcePackage: 
packageName=org.apache.hadoop.hdfs.server.namenode.web.resources;org.apache.hadoop.hdfs.web.resources, pathSpec=/webhdfs/v1/* 2015-07-30 22:32:34,226 INFO org.apache.hadoop.http.HttpServer2: Jetty bound to port 50070 2015-07-30 22:32:34,229 INFO org.mortbay.log: jetty-6.1.26 2015-07-30 22:32:35,319 INFO org.mortbay.log: Started HttpServer2$SelectChannelConnectorWithSafeStartup@larry-master:50070 2015-07-30 22:32:35,772 WARN org.apache.hadoop.hdfs.server.namenode.FSNamesystem: Only one image storage directory (dfs.namenode.name.dir) configured. Beware of data loss due to lack of redundant storage directories! 2015-07-30 22:32:35,772 WARN org.apache.hadoop.hdfs.server.namenode.FSNamesystem: Only one namespace edits storage directory (dfs.namenode.edits.dir) configured. Beware of data loss due to lack of redundant storage directories! 2015-07-30 22:32:35,842 INFO org.apache.hadoop.hdfs.server.namenode.FSNamesystem: No KeyProvider found. 2015-07-30 22:32:35,842 INFO org.apache.hadoop.hdfs.server.namenode.FSNamesystem: fsLock is fair:true 2015-07-30 22:32:36,068 INFO org.apache.hadoop.hdfs.server.blockmanagement.DatanodeManager: dfs.block.invalidate.limit=1000 2015-07-30 22:32:36,068 INFO org.apache.hadoop.hdfs.server.blockmanagement.DatanodeManager: dfs.namenode.datanode.registration.ip-hostname-check=true 2015-07-30 22:32:36,074 INFO org.apache.hadoop.hdfs.server.blockmanagement.BlockManager: dfs.namenode.startup.delay.block.deletion.sec is set to 000:00:00:00.000 2015-07-30 22:32:36,099 INFO org.apache.hadoop.hdfs.server.blockmanagement.BlockManager: The block deletion will start around 2015 Jul 30 22:32:36 2015-07-30 22:32:36,101 INFO org.apache.hadoop.util.GSet: Computing capacity for map BlocksMap 2015-07-30 22:32:36,101 INFO org.apache.hadoop.util.GSet: VM type = 64-bit 2015-07-30 22:32:36,108 INFO org.apache.hadoop.util.GSet: 2.0% max memory 966.7 MB = 19.3 MB 2015-07-30 22:32:36,108 INFO org.apache.hadoop.util.GSet: capacity = 2^21 = 2097152 entries 2015-07-30 
22:32:36,328 INFO org.apache.hadoop.hdfs.server.blockmanagement.BlockManager: dfs.block.access.token.enable=false 2015-07-30 22:32:36,331 INFO org.apache.hadoop.hdfs.server.blockmanagement.BlockManager: defaultReplication = 2 2015-07-30 22:32:36,331 INFO org.apache.hadoop.hdfs.server.blockmanagement.BlockManager: maxReplication = 512 2015-07-30 22:32:36,331 INFO org.apache.hadoop.hdfs.server.blockmanagement.BlockManager: minReplication = 1 2015-07-30 22:32:36,331 INFO org.apache.hadoop.hdfs.server.blockmanagement.BlockManager: maxReplicationStreams = 2 2015-07-30 22:32:36,331 INFO org.apache.hadoop.hdfs.server.blockmanagement.BlockManager: shouldCheckForEnoughRacks = false 2015-07-30 22:32:36,332 INFO org.apache.hadoop.hdfs.server.blockmanagement.BlockManager: replicationRecheckInterval = 3000 2015-07-30 22:32:36,332 INFO org.apache.hadoop.hdfs.server.blockmanagement.BlockManager: encryptDataTransfer = false 2015-07-30 22:32:36,332 INFO org.apache.hadoop.hdfs.server.blockmanagement.BlockManager: maxNumBlocksToLog = 1000 2015-07-30 22:32:36,370 INFO org.apache.hadoop.hdfs.server.namenode.FSNamesystem: fsOwner = larry (auth:SIMPLE) 2015-07-30 22:32:36,372 INFO org.apache.hadoop.hdfs.server.namenode.FSNamesystem: supergroup = supergroup 2015-07-30 22:32:36,372 INFO org.apache.hadoop.hdfs.server.namenode.FSNamesystem: isPermissionEnabled = true 2015-07-30 22:32:36,373 INFO org.apache.hadoop.hdfs.server.namenode.FSNamesystem: HA Enabled: false 2015-07-30 22:32:36,378 INFO org.apache.hadoop.hdfs.server.namenode.FSNamesystem: Append Enabled: true 2015-07-30 22:32:37,567 INFO org.apache.hadoop.util.GSet: Computing capacity for map INodeMap 2015-07-30 22:32:37,567 INFO org.apache.hadoop.util.GSet: VM type = 64-bit 2015-07-30 22:32:37,568 INFO org.apache.hadoop.util.GSet: 1.0% max memory 966.7 MB = 9.7 MB 2015-07-30 22:32:37,568 INFO org.apache.hadoop.util.GSet: capacity = 2^20 = 1048576 entries 2015-07-30 22:32:37,597 INFO org.apache.hadoop.hdfs.server.namenode.FSDirectory: 
ACLs enabled? false 2015-07-30 22:32:37,597 INFO org.apache.hadoop.hdfs.server.namenode.FSDirectory: XAttrs enabled? true 2015-07-30 22:32:37,597 INFO org.apache.hadoop.hdfs.server.namenode.FSDirectory: Maximum size of an xattr: 16384 2015-07-30 22:32:37,597 INFO org.apache.hadoop.hdfs.server.namenode.NameNode: Caching file names occuring more than 10 times 2015-07-30 22:32:37,628 INFO org.apache.hadoop.util.GSet: Computing capacity for map cachedBlocks 2015-07-30 22:32:37,628 INFO org.apache.hadoop.util.GSet: VM type = 64-bit 2015-07-30 22:32:37,628 INFO org.apache.hadoop.util.GSet: 0.25% max memory 966.7 MB = 2.4 MB 2015-07-30 22:32:37,628 INFO org.apache.hadoop.util.GSet: capacity = 2^18 = 262144 entries 2015-07-30 22:32:37,632 INFO org.apache.hadoop.hdfs.server.namenode.FSNamesystem: dfs.namenode.safemode.threshold-pct = 0.9990000128746033 2015-07-30 22:32:37,632 INFO org.apache.hadoop.hdfs.server.namenode.FSNamesystem: dfs.namenode.safemode.min.datanodes = 0 2015-07-30 22:32:37,632 INFO org.apache.hadoop.hdfs.server.namenode.FSNamesystem: dfs.namenode.safemode.extension = 30000 2015-07-30 22:32:37,661 INFO org.apache.hadoop.hdfs.server.namenode.top.metrics.TopMetrics: NNTop conf: dfs.namenode.top.window.num.buckets = 10 2015-07-30 22:32:37,661 INFO org.apache.hadoop.hdfs.server.namenode.top.metrics.TopMetrics: NNTop conf: dfs.namenode.top.num.users = 10 2015-07-30 22:32:37,665 INFO org.apache.hadoop.hdfs.server.namenode.top.metrics.TopMetrics: NNTop conf: dfs.namenode.top.windows.minutes = 1,5,25 2015-07-30 22:32:37,672 INFO org.apache.hadoop.hdfs.server.namenode.FSNamesystem: Retry cache on namenode is enabled 2015-07-30 22:32:37,672 INFO org.apache.hadoop.hdfs.server.namenode.FSNamesystem: Retry cache will use 0.03 of total heap and retry cache entry expiry time is 600000 millis 2015-07-30 22:32:37,696 INFO org.apache.hadoop.util.GSet: Computing capacity for map NameNodeRetryCache 2015-07-30 22:32:37,696 INFO org.apache.hadoop.util.GSet: VM type = 64-bit 
2015-07-30 22:32:37,697 INFO org.apache.hadoop.util.GSet: 0.029999999329447746% max memory 966.7 MB = 297.0 KB
2015-07-30 22:32:37,697 INFO org.apache.hadoop.util.GSet: capacity = 2^15 = 32768 entries
2015-07-30 22:32:37,989 INFO org.apache.hadoop.hdfs.server.common.Storage: Lock on /usr/hadoop27/dfs-name/in_use.lock acquired by nodename 4165@larry-master
2015-07-30 22:32:38,245 INFO org.apache.hadoop.hdfs.server.namenode.FileJournalManager: Recovering unfinalized segments in /usr/hadoop27/dfs-name/current
2015-07-30 22:32:38,246 INFO org.apache.hadoop.hdfs.server.namenode.FSImage: No edit log streams selected.
2015-07-30 22:32:38,571 INFO org.apache.hadoop.hdfs.server.namenode.FSImageFormatPBINode: Loading 1 INodes.
2015-07-30 22:32:38,626 INFO org.apache.hadoop.hdfs.server.namenode.FSImageFormatProtobuf: Loaded FSImage in 0 seconds.
2015-07-30 22:32:38,626 INFO org.apache.hadoop.hdfs.server.namenode.FSImage: Loaded image for txid 0 from /usr/hadoop27/dfs-name/current/fsimage_0000000000000000000
2015-07-30 22:32:38,695 INFO org.apache.hadoop.hdfs.server.namenode.FSNamesystem: Need to save fs image? false (staleImage=false, haEnabled=false, isRollingUpgrade=false)
2015-07-30 22:32:38,697 INFO org.apache.hadoop.hdfs.server.namenode.FSEditLog: Starting log segment at 1
2015-07-30 22:32:39,600 INFO org.apache.hadoop.hdfs.server.namenode.NameCache: initialized with 0 entries 0 lookups
2015-07-30 22:32:39,601 INFO org.apache.hadoop.hdfs.server.namenode.FSNamesystem: Finished loading FSImage in 1885 msecs
2015-07-30 22:32:40,913 INFO org.apache.hadoop.hdfs.server.namenode.NameNode: RPC server is binding to larry-master:9000
2015-07-30 22:32:40,937 INFO org.apache.hadoop.ipc.CallQueueManager: Using callQueue class java.util.concurrent.LinkedBlockingQueue
2015-07-30 22:32:40,976 INFO org.apache.hadoop.ipc.Server: Starting Socket Reader #1 for port 9000
2015-07-30 22:32:41,181 INFO org.apache.hadoop.hdfs.server.namenode.FSNamesystem: Registered FSNamesystemState MBean
2015-07-30 22:32:41,632 INFO org.apache.hadoop.hdfs.server.namenode.LeaseManager: Number of blocks under construction: 0
2015-07-30 22:32:41,632 INFO org.apache.hadoop.hdfs.server.namenode.LeaseManager: Number of blocks under construction: 0
2015-07-30 22:32:41,632 INFO org.apache.hadoop.hdfs.server.namenode.FSNamesystem: initializing replication queues
2015-07-30 22:32:41,633 INFO org.apache.hadoop.hdfs.StateChange: STATE* Leaving safe mode after 5 secs
2015-07-30 22:32:41,633 INFO org.apache.hadoop.hdfs.StateChange: STATE* Network topology has 0 racks and 0 datanodes
2015-07-30 22:32:41,633 INFO org.apache.hadoop.hdfs.StateChange: STATE* UnderReplicatedBlocks has 0 blocks
2015-07-30 22:32:41,698 INFO org.apache.hadoop.hdfs.server.blockmanagement.DatanodeDescriptor: Number of failed storage changes from 0 to 0
2015-07-30 22:32:41,713 INFO org.apache.hadoop.hdfs.server.blockmanagement.BlockManager: Total number of blocks = 0
2015-07-30 22:32:41,713 INFO org.apache.hadoop.hdfs.server.blockmanagement.BlockManager: Number of invalid blocks = 0
2015-07-30 22:32:41,713 INFO org.apache.hadoop.hdfs.server.blockmanagement.BlockManager: Number of under-replicated blocks = 0
2015-07-30 22:32:41,713 INFO org.apache.hadoop.hdfs.server.blockmanagement.BlockManager: Number of over-replicated blocks = 0
2015-07-30 22:32:41,713 INFO org.apache.hadoop.hdfs.server.blockmanagement.BlockManager: Number of blocks being written = 0
2015-07-30 22:32:41,713 INFO org.apache.hadoop.hdfs.StateChange: STATE* Replication Queue initialization scan for invalid, over- and under-replicated blocks completed in 79 msec
2015-07-30 22:32:41,890 INFO org.apache.hadoop.ipc.Server: IPC Server Responder: starting
2015-07-30 22:32:41,894 INFO org.apache.hadoop.ipc.Server: IPC Server listener on 9000: starting
2015-07-30 22:32:41,900 INFO org.apache.hadoop.hdfs.server.namenode.NameNode: NameNode RPC up at: larry-master/192.168.1.20:9000
2015-07-30 22:32:41,901 INFO org.apache.hadoop.hdfs.server.namenode.FSNamesystem: Starting services required for active state
2015-07-30 22:32:41,965 INFO org.apache.hadoop.hdfs.server.blockmanagement.CacheReplicationMonitor: Starting CacheReplicationMonitor with interval 30000 milliseconds
2015-07-30 22:38:53,420 INFO org.apache.hadoop.util.JvmPauseMonitor: Detected pause in JVM or host machine (eg GC): pause of approximately 3825ms
GC pool 'Copy' had collection(s): count=1 time=3789ms
2015-07-30 22:39:06,685 INFO org.apache.hadoop.util.JvmPauseMonitor: Detected pause in JVM or host machine (eg GC): pause of approximately 6535ms
GC pool 'Copy' had collection(s): count=1 time=216ms
GC pool 'MarkSweepCompact' had collection(s): count=1 time=6548ms
2015-07-30 23:01:15,340 INFO org.apache.hadoop.hdfs.StateChange: BLOCK* registerDatanode: from DatanodeRegistration(192.168.1.22:50010, datanodeUuid=6ecfa028-8fa2-4e6f-af86-7ca789191b6b, infoPort=50075, infoSecurePort=0, ipcPort=50020, storageInfo=lv=-56;cid=CID-2282d5f8-13d8-4c38-96e8-b8c0864f68fb;nsid=1301589930;c=0) storage 6ecfa028-8fa2-4e6f-af86-7ca789191b6b
2015-07-30 23:01:15,350 INFO org.apache.hadoop.hdfs.server.blockmanagement.DatanodeDescriptor: Number of failed storage changes from 0 to 0
2015-07-30 23:01:15,352 INFO org.apache.hadoop.net.NetworkTopology: Adding a new node: /default-rack/192.168.1.22:50010
2015-07-30 23:01:15,996 INFO org.apache.hadoop.hdfs.server.blockmanagement.DatanodeDescriptor: Number of failed storage changes from 0 to 0
2015-07-30 23:01:15,996 INFO org.apache.hadoop.hdfs.server.blockmanagement.DatanodeDescriptor: Adding new storage ID DS-6bc7b957-921b-4b60-9b30-143badc08c80 for DN 192.168.1.22:50010
2015-07-30 23:01:16,245 INFO BlockStateChange: BLOCK* processReport: from storage DS-6bc7b957-921b-4b60-9b30-143badc08c80 node DatanodeRegistration(192.168.1.22:50010, datanodeUuid=6ecfa028-8fa2-4e6f-af86-7ca789191b6b, infoPort=50075, infoSecurePort=0, ipcPort=50020, storageInfo=lv=-56;cid=CID-2282d5f8-13d8-4c38-96e8-b8c0864f68fb;nsid=1301589930;c=0), blocks: 0, hasStaleStorage: false, processing time: 7 msecs
2015-07-30 23:05:55,139 ERROR org.apache.hadoop.hdfs.server.namenode.NameNode: RECEIVED SIGNAL 15: SIGTERM
2015-07-30 23:05:55,227 INFO org.apache.hadoop.hdfs.server.namenode.NameNode: SHUTDOWN_MSG:
/************************************************************
SHUTDOWN_MSG: Shutting down NameNode at larry-master/192.168.1.20
************************************************************/
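The two warnings and the final error mentioned with this log are easy to miss among the INFO entries. The Python sketch below is one way to pull them out. It splits on the timestamp and level prefix instead of on newlines, so it still works on text whose line breaks were lost in pasting. The function names are hypothetical and the sample string is shortened from the log above.

```python
import re

# Start of a log4j entry as it appears in the log above, for example
# "2015-07-30 22:32:35,772 WARN <logger>: <message>". Whitespace is
# matched loosely so a stray line wrap inside the prefix is tolerated.
ENTRY_START = re.compile(
    r"(\d{4}-\d{2}-\d{2})\s+(\d{2}:\d{2}:\d{2},\d{3})\s+"
    r"(DEBUG|INFO|WARN|ERROR|FATAL)\s+"
)


def split_entries(text):
    """Return a list of (timestamp, level, message) tuples."""
    matches = list(ENTRY_START.finditer(text))
    entries = []
    for i, m in enumerate(matches):
        # An entry runs until the next timestamp prefix or the end of text.
        end = matches[i + 1].start() if i + 1 < len(matches) else len(text)
        message = " ".join(text[m.end():end].split())
        entries.append((m.group(1) + " " + m.group(2), m.group(3), message))
    return entries


def problems(text, levels=("WARN", "ERROR", "FATAL")):
    """Keep only the entries whose level is in `levels`."""
    return [entry for entry in split_entries(text) if entry[1] in levels]


if __name__ == "__main__":
    sample = (
        "2015-07-30 22:32:35,842 INFO org.apache.hadoop.hdfs.server.namenode."
        "FSNamesystem: No KeyProvider found. "
        "2015-07-30 23:05:55,139 ERROR org.apache.hadoop.hdfs.server.namenode."
        "NameNode: RECEIVED SIGNAL 15: SIGTERM"
    )
    for timestamp, level, message in problems(sample):
        print(timestamp, level, message)
```

Note that `RECEIVED SIGNAL 15: SIGTERM` is written when the NameNode process is sent a termination signal, which is also what happens when the daemon is stopped deliberately, so this entry alone may not point to a fault.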

rancho posted on 2015-8-1 01:43
Thanks, I'll give it a try; this seems very likely to be the problem. I should clear the log data on the master and on all the slaves, right?

arsenduan posted on 2015-7-31 09:46
The error is the "exiting with status 0" message shown in image 4.png. Thanks.

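pig2 posted on 2015-8-1 22:15
Thanks to the moderator for the support. I have read the articles too; since I only study after work, I have not watched the videos (they take quite a lot of time).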

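larry posted on 2015-8-1 22:08
First learn to read the logs. See "Where are the Hadoop logs? Specifying the exact log location": http://www.aboutyun.com/thread-5856-1-1.html
I suggest getting the basics down first; donations are welcome: about云 Hadoop ecosystem zero-to-beginner introduction [continuously updated] https://item.taobao.com/item.htm ... amp;id=520413355976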
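As a rough guide to where the daemon logs live, here is a small Python sketch. It assumes the usual Hadoop 2.x layout, where logs go to `$HADOOP_LOG_DIR` or, if that is unset, to a `logs` directory under the install root, and where files are named `hadoop-<user>-<daemon>-<host>.log`. That naming matches the attachment posted in this thread; the install root `/usr/hadoop27` is inferred from the classpath in the log, and the function name is hypothetical.

```python
import getpass
import os
import posixpath
import socket


def daemon_log_path(daemon, hadoop_home, user=None, host=None, log_dir=None):
    """Build the expected log file path for one Hadoop daemon."""
    user = user or getpass.getuser()
    host = host or socket.gethostname()
    # An explicit log_dir wins, then HADOOP_LOG_DIR, then <home>/logs.
    log_dir = (
        log_dir
        or os.environ.get("HADOOP_LOG_DIR")
        or posixpath.join(hadoop_home, "logs")
    )
    return posixpath.join(log_dir, "hadoop-%s-%s-%s.log" % (user, daemon, host))


if __name__ == "__main__":
    # Values taken from this thread: user "larry", host "larry-master".
    print(daemon_log_path("namenode", "/usr/hadoop27",
                          user="larry", host="larry-master"))
```

The same function can be called with `"datanode"` on a slave node to find the log that would show why a DataNode failed to start or register.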
